Returns are an unavoidable part of D2C commerce in India, but they are also one of the least understood sources of margin leakage. As volumes scale, teams often sense that a subset of returns feels “off” — frequent refunds, mismatched items, serial COD refusals, or customers who repeatedly exploit lenient policies. Yet most brands respond with blunt controls: tightening return windows, increasing checks for everyone, or pushing costs onto genuine customers.

A hybrid rules + behavioural approach to scoring returns for fraud risk starts from the recognition that this blunt, binary way of thinking no longer works. Return fraud is not a yes-or-no problem. It exists on a spectrum, and treating every return as equally risky either increases operational cost or damages customer experience.

This post outlines how D2C brands can combine deterministic rules with behavioural signals to score return risk at both the order level and the customer level. The focus is on building a practical, explainable scoring system that ops, CX and finance teams can trust and use to reduce fraud without penalising honest customers.

Why does return fraud increase as D2C brands scale?

Volume, policy generosity and weak signals create exploitable gaps

Return fraud rarely spikes overnight. It grows quietly as brands optimise for growth and convenience.

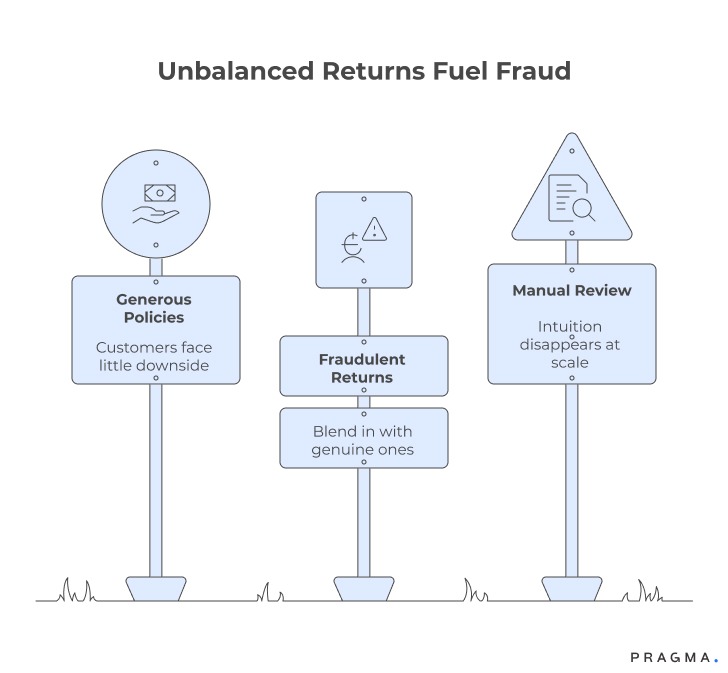

Generous policies create asymmetry

Easy returns and instant refunds improve conversion, but they also create an imbalance: customers face little downside, while brands absorb cost and uncertainty.

Fraud hides within legitimate behaviour

Most fraudulent returns resemble genuine ones — late claims, item condition disputes, partial returns. Without deeper signals, they blend in.

Manual review does not scale

At low volumes, CX agents spot patterns informally. At scale, this intuition disappears, and reviews become inconsistent or skipped entirely.

Fraud thrives where signals are fragmented and decisions are binary.

What does “return fraud” actually look like in practice?

Patterns are subtle, repetitive and behaviour-driven

Return fraud is rarely dramatic. It is cumulative.

Common fraud patterns seen in Indian D2C

- Repeated “item damaged” claims with no images

- Frequent returns clustered around COD orders

- Switching items before return pickup

- High-value returns followed by low engagement

- Multiple accounts using similar addresses or numbers

Why intent is hard to prove

Individually, these behaviours are explainable. Collectively, they form patterns that indicate elevated risk — not certainty.

This is why scoring matters more than classification.

Why is a binary fraud decision the wrong approach?

Risk exists on a gradient, not as a label

Binary decisions force teams into extremes.

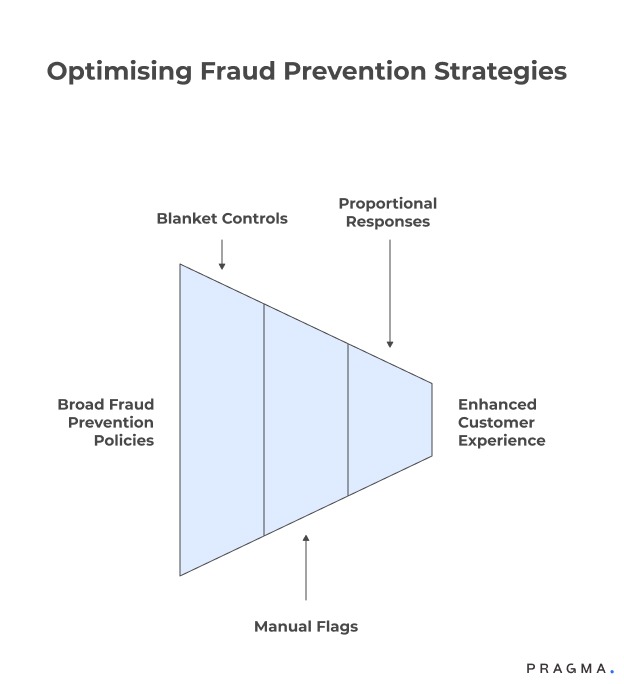

Blanket controls punish good customers

Tightening policies for everyone increases friction, reduces trust and impacts repeat rates.

Over-reliance on manual flags introduces bias

Different agents apply rules differently. Outcomes vary by shift, mood or workload.

Fraud prevention must be proportional

A ₹700 T-shirt return and a ₹12,000 electronics return should not be treated the same — even if the signals look similar.

A scoring model enables proportional responses.

What is a hybrid rules + behavioural fraud scoring approach?

Deterministic guardrails combined with probabilistic signals

A hybrid approach blends certainty with pattern recognition.

Rules establish non-negotiable boundaries

Rules handle known, high-confidence risk.

Examples of rules

- Return initiated after policy window

- Mismatch between SKU shipped and SKU returned

- Blacklisted phone numbers or addresses

- Excessive returns within a fixed time period

Rules are explainable and enforce policy consistently.
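
As a rough illustration, the example rules above could be expressed as simple deterministic checks. This is a minimal sketch, not a reference implementation: the `ReturnRequest` fields, the 7-day window, the 90-day return limit and the blacklist entry are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ReturnRequest:
    # Hypothetical fields; map these to your own order and return data.
    order_id: str
    phone: str
    sku_shipped: str
    sku_returned: str
    delivered_on: date
    requested_on: date
    returns_last_90_days: int  # prior returns by the same customer


# Illustrative policy values, not recommendations.
RETURN_WINDOW_DAYS = 7
MAX_RETURNS_PER_90_DAYS = 3
BLACKLISTED_PHONES = {"+910000000000"}


def rule_hits(req: ReturnRequest) -> list[str]:
    """Return the name of every rule this return request trips."""
    hits = []
    if req.requested_on - req.delivered_on > timedelta(days=RETURN_WINDOW_DAYS):
        hits.append("outside_return_window")
    if req.sku_returned != req.sku_shipped:
        hits.append("sku_mismatch")
    if req.phone in BLACKLISTED_PHONES:
        hits.append("blacklisted_contact")
    if req.returns_last_90_days > MAX_RETURNS_PER_90_DAYS:
        hits.append("excessive_returns")
    return hits


example = ReturnRequest(
    order_id="ORD-1",
    phone="+919999999999",
    sku_shipped="TSHIRT-M-BLK",
    sku_returned="TSHIRT-M-BLK",
    delivered_on=date(2024, 1, 1),
    requested_on=date(2024, 1, 12),
    returns_last_90_days=5,
)
print(rule_hits(example))  # ['outside_return_window', 'excessive_returns']
```

Returning rule names rather than a single true/false keeps each hit explainable to the agent reviewing the return.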

Behavioural signals capture emerging risk

Behavioural signals identify suspicious patterns that rules alone cannot catch.

Examples of behavioural signals

- Increasing return frequency over time

- Higher return rates on specific categories

- Declining engagement post-refund

- Repeated address-level returns across accounts

Behavioural signals adapt as behaviour evolves.
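
Two of the signals above, rising return frequency and address-level returns across accounts, can be approximated with very little code. The sketch below is one possible way to compute them; the function names, the half-versus-half comparison and the address normalisation are assumptions made for illustration, and real address matching usually needs more careful handling.

```python
from collections import defaultdict


def return_rate_trend(monthly_orders: list[int], monthly_returns: list[int]) -> float:
    """Change in return rate between the older and newer half of a customer's history."""
    half = len(monthly_orders) // 2

    def rate(orders: list[int], returns: list[int]) -> float:
        total = sum(orders)
        return sum(returns) / total if total else 0.0

    older = rate(monthly_orders[:half], monthly_returns[:half])
    newer = rate(monthly_orders[half:], monthly_returns[half:])
    return newer - older


def accounts_per_address(returns: list[tuple[str, str]]) -> dict[str, int]:
    """Count distinct customer accounts returning from each normalised address."""
    seen = defaultdict(set)
    for customer_id, address in returns:
        # Naive normalisation: lower-case and collapse repeated whitespace.
        seen[" ".join(address.lower().split())].add(customer_id)
    return {address: len(ids) for address, ids in seen.items()}


# A customer whose return rate rose from 1 in 8 orders to 5 in 8 orders.
print(return_rate_trend([4, 4, 4, 4], [0, 1, 2, 3]))  # 0.5

# Two accounts returning from what is effectively the same address.
print(accounts_per_address([
    ("c1", "12 MG Road, Pune"),
    ("c2", "12  mg road, pune"),
    ("c3", "4 Park St, Kolkata"),
]))  # {'12 mg road, pune': 2, '4 park st, kolkata': 1}
```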

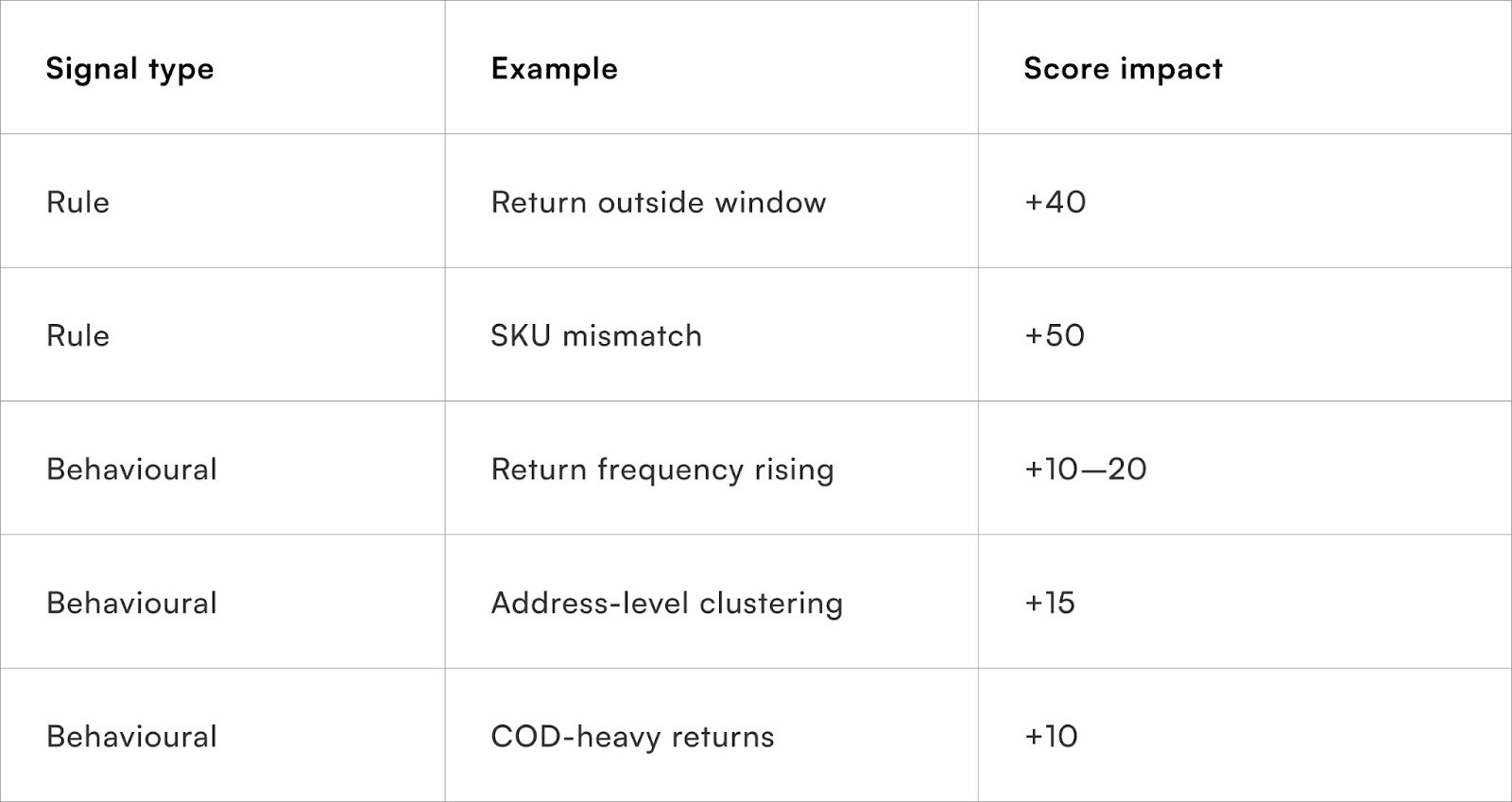

How should a return fraud risk score be structured?

Simple, weighted, interpretable

The goal is not a perfect model, but a useful one.

Start with a bounded score range

Use a 0–100 scale or Low / Medium / High buckets to keep interpretation simple.

Combine rule hits and behavioural weights

Rules can add fixed points; behaviours add weighted points based on severity.

Keep score components visible

Ops and CX teams should see why a score is high. Black-box scores reduce trust and adoption.
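
The structure described above (a bounded score, fixed points for rules, weighted points for behaviours, visible components) might look like the following sketch. Every rule name, signal name, point value and band threshold here is a hypothetical starting point, not a recommended setting.

```python
# Hypothetical point values; tune these against your own return data.
RULE_POINTS = {
    "outside_return_window": 40,
    "sku_mismatch": 50,
    "blacklisted_contact": 60,
    "excessive_returns": 25,
}
BEHAVIOUR_WEIGHTS = {
    "return_rate_trend": 30,
    "shared_address": 20,
    "cod_refusal_rate": 15,
}


def score_return(rule_hits: list[str], signals: dict[str, float]) -> dict:
    """Combine fixed rule points and weighted signals into a 0-100 score.

    `signals` maps a signal name to a severity between 0.0 and 1.0.
    """
    components = {}
    for rule in rule_hits:
        components[rule] = float(RULE_POINTS.get(rule, 0))
    for name, severity in signals.items():
        clamped = min(max(severity, 0.0), 1.0)
        components[name] = round(BEHAVIOUR_WEIGHTS.get(name, 0) * clamped, 1)
    score = min(100.0, sum(components.values()))
    band = "High" if score >= 70 else "Medium" if score >= 40 else "Low"
    # Components are returned alongside the score so reviewers can see why it is high.
    return {"score": score, "band": band, "components": components}


result = score_return(
    ["excessive_returns"],
    {"return_rate_trend": 0.5, "shared_address": 1.0},
)
print(result["score"], result["band"])  # 60.0 Medium
print(result["components"])
```

Capping the total at 100 keeps the scale bounded even when several rules fire at once, and the `components` dictionary is what an agent-facing screen would display next to the score.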

How should different teams use the fraud score?

One score, multiple operational decisions

The same score should drive different actions across teams.

Customer experience teams

- Low risk: instant refund

- Medium risk: delayed refund post-quality check

- High risk: manual review or stricter documentation

Operations teams

- High-risk returns routed to specialised QC hubs

- Photo or video verification at pickup

- Avoid doorstep refunds for risky orders

Finance and policy teams

- Monitor fraud loss by cohort

- Adjust thresholds by category or price band

The score is a coordination mechanism, not just a filter.
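
One way to wire a single score into several team-level decisions is a small lookup from risk band to actions, with thresholds that tighten for higher-priced orders. The sketch below assumes a 0-100 score; the thresholds, the ₹5,000 cut-off and the medium-band ops action are illustrative assumptions rather than prescribed values.

```python
# Hypothetical thresholds: (medium_at, high_at) on a 0-100 score.
DEFAULT_THRESHOLDS = (40, 70)
HIGH_VALUE_THRESHOLDS = (30, 60)
HIGH_VALUE_INR = 5000

# Illustrative mapping from band to per-team actions.
ACTIONS = {
    "Low": {"cx": "instant refund", "ops": "standard pickup"},
    "Medium": {"cx": "refund after quality check", "ops": "standard pickup with quality check"},
    "High": {
        "cx": "manual review or stricter documentation",
        "ops": "verified pickup, specialised QC hub, no doorstep refund",
    },
}


def band_for(score: float, order_value_inr: float) -> str:
    """Pick a risk band, using stricter thresholds for high-value orders."""
    medium_at, high_at = (
        HIGH_VALUE_THRESHOLDS if order_value_inr >= HIGH_VALUE_INR else DEFAULT_THRESHOLDS
    )
    if score >= high_at:
        return "High"
    if score >= medium_at:
        return "Medium"
    return "Low"


def actions_for(score: float, order_value_inr: float) -> dict[str, str]:
    """Return the CX and ops actions for a scored return."""
    return ACTIONS[band_for(score, order_value_inr)]


# The same score leads to different handling at different price points.
print(actions_for(65, 700)["cx"])    # refund after quality check
print(actions_for(65, 12000)["cx"])  # manual review or stricter documentation
```

Keeping thresholds in plain constants gives finance and policy teams one obvious place to adjust them by category or price band.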

How do behavioural signals improve over time?

Feedback loops make the model smarter

Behavioural scoring should evolve with outcomes.

Close the loop with return outcomes

Feed back:

- QC results

- Refund reversals

- Confirmed fraud cases

Adjust weights, not rules, first

Rules should change sparingly. Behavioural weights can be tuned weekly or monthly.

Watch for displacement effects

Fraudsters adapt. Monitor for shifts in behaviour, such as switching categories or payment modes.
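
The weight-tuning step could start as a simple heuristic long before any formal model is involved. The sketch below nudges each behavioural weight up or down by a small step, depending on whether returns where that signal fired were confirmed as fraud more or less often than the overall rate. The function, the 10% step and the outcome format are assumptions for illustration, and this is only one of many reasonable tuning schemes.

```python
def tune_weights(
    weights: dict[str, float],
    outcomes: list[tuple[set[str], bool]],
    step: float = 0.1,
) -> dict[str, float]:
    """Nudge behavioural weights using reviewed return outcomes.

    `outcomes` holds (signals_fired, confirmed_fraud) pairs from QC results,
    refund reversals and confirmed fraud cases.
    """
    if not outcomes:
        return dict(weights)
    base_rate = sum(fraud for _, fraud in outcomes) / len(outcomes)
    tuned = {}
    for name, weight in weights.items():
        fired = [fraud for signals, fraud in outcomes if name in signals]
        if not fired:
            tuned[name] = weight  # no evidence either way, leave unchanged
            continue
        rate = sum(fired) / len(fired)
        if rate > base_rate:
            tuned[name] = round(weight * (1 + step), 2)
        elif rate < base_rate:
            tuned[name] = round(weight * (1 - step), 2)
        else:
            tuned[name] = weight
    return tuned


reviewed = [
    ({"shared_address"}, True),
    ({"shared_address"}, True),
    ({"return_rate_trend"}, False),
    (set(), False),
]
print(tune_weights({"shared_address": 20.0, "return_rate_trend": 30.0}, reviewed))
# {'shared_address': 22.0, 'return_rate_trend': 27.0}
```

A small, bounded step keeps any single review cycle from swinging the score sharply, which matters when the number of confirmed cases is low.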

How should brands roll this out incrementally?

Low-risk pilots before policy-wide enforcement

Phase 1 — Shadow scoring

Score returns without changing outcomes. Then compare which returns were scored as high risk against the losses actually recorded.

Phase 2 — Soft interventions

Apply delays or additional checks only to the highest-risk band.

Phase 3 — Policy integration

Use scores to influence refund SLAs, pickup verification and eligibility.

Incremental rollout builds confidence and internal buy-in.
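
For the shadow-scoring phase, the comparison between scores and actual losses can be as simple as grouping a log of scored returns by risk band. The sketch below assumes a hypothetical log format with a `band` and a `loss_inr` field per return; the figures in the example are made up purely to show the calculation.

```python
def loss_by_band(shadow_log: list[dict]) -> dict[str, dict]:
    """Summarise return count, total loss and share of loss per risk band."""
    summary = {}
    for row in shadow_log:
        stats = summary.setdefault(row["band"], {"returns": 0, "loss_inr": 0.0})
        stats["returns"] += 1
        stats["loss_inr"] += row["loss_inr"]
    total_loss = sum(stats["loss_inr"] for stats in summary.values())
    for stats in summary.values():
        stats["loss_share"] = round(stats["loss_inr"] / total_loss, 2) if total_loss else 0.0
    return summary


# Made-up shadow log: scores were recorded, outcomes were not changed.
log = [
    {"band": "High", "loss_inr": 1200.0},
    {"band": "High", "loss_inr": 0.0},
    {"band": "Low", "loss_inr": 0.0},
    {"band": "Low", "loss_inr": 300.0},
    {"band": "Medium", "loss_inr": 0.0},
]
for band, stats in loss_by_band(log).items():
    print(band, stats)
```

If the high-risk band holds a small share of returns but a large share of losses, the score is separating risk usefully; if losses are spread evenly across bands, the rules and weights need another look before any intervention goes live.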

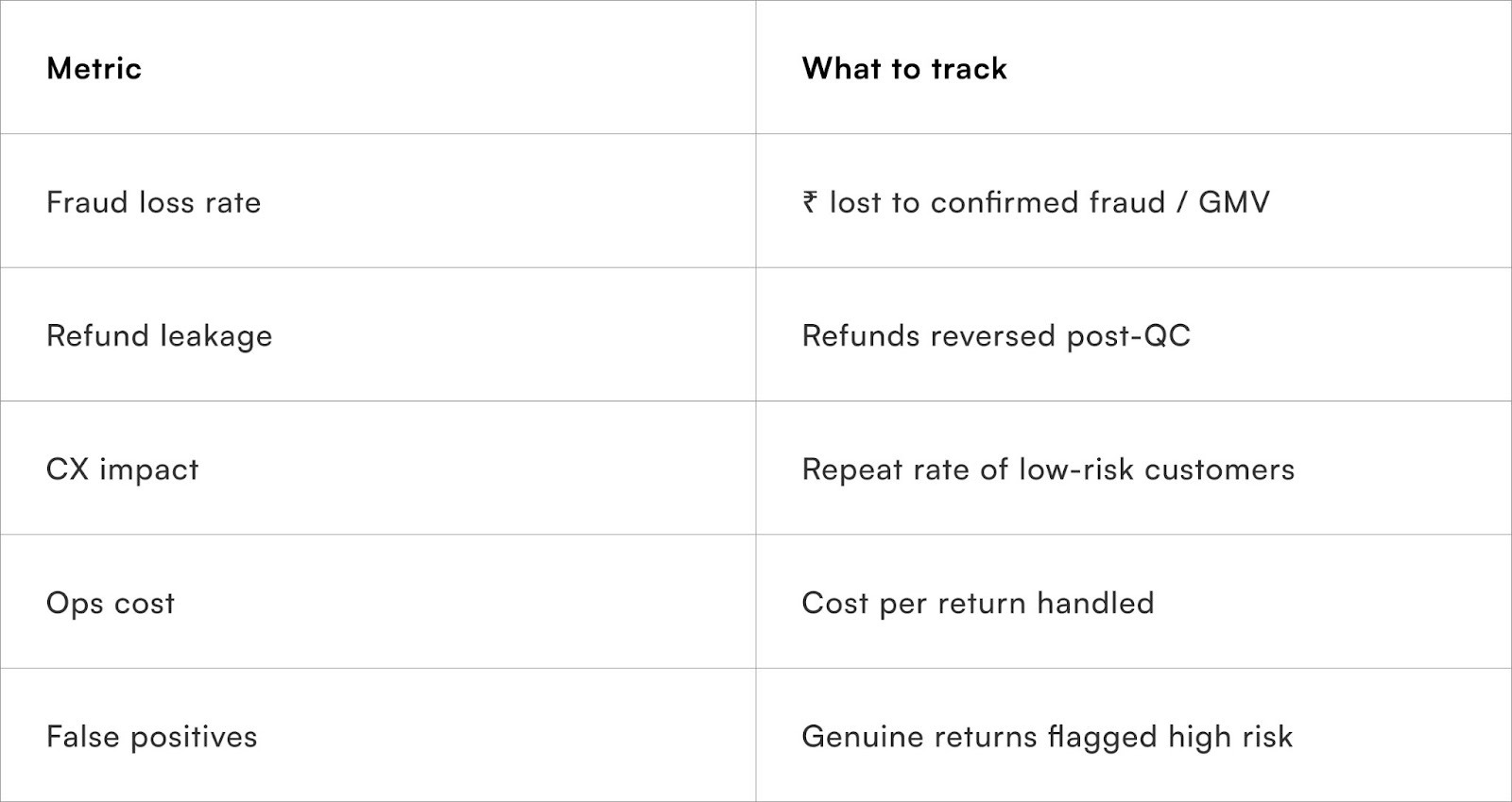

What metrics indicate fraud scoring is working?

Focus on loss reduction, not just fewer returns

Balance fraud reduction with customer trust.

Quick Wins

Build a usable fraud score without heavy modelling

Week 1

- Audit return reasons, refund flows and QC outcomes

- Identify obvious rule-based risks

Week 2

- Define 5–7 behavioural signals

- Assign initial weights conservatively

Week 3

- Run shadow scoring on live returns

- Compare scores with outcomes

Week 4

- Introduce soft controls for top-risk band

- Review impact weekly

Expected result:

Early visibility into risky cohorts and reduced refund leakage without CX fallout.

To Wrap It Up

Return fraud cannot be eliminated, but it can be managed intelligently. Scoring returns allows brands to respond proportionally, protect margins and preserve trust at the same time.

This week, define 5–7 behavioural signals and start shadow-scoring returns without changing outcomes.

Over time, refine weights, integrate scores into refund and pickup workflows, and continuously learn from outcomes. Fraud prevention works best when it evolves quietly alongside growth.

For D2C brands seeking structured fraud detection across returns, Pragma’s Returns Intelligence platform provides explainable risk scoring, behavioural analysis and workflow controls that help teams reduce refund leakage and operational losses.

FAQs (Frequently Asked Questions On Scoring returns for fraud risk: a hybrid rules + behavioural approach)

1. Is return fraud common in Indian D2C?

It is not widespread, but it is concentrated. A small percentage of customers often account for a large share of losses.

2. Will fraud scoring hurt genuine customers?

Not if implemented proportionally. Low-risk customers should experience faster refunds, not slower ones.

3. Do we need ML models to do this well?

No. A weighted rules + behaviour system is effective and easier to operate initially.

4. How often should scores be recalibrated?

Behavioural weights can be reviewed monthly; core rules less frequently.

5. Can this work for COD-heavy brands?

Yes. COD behaviour is a strong signal when combined with frequency and category patterns.

6. Should fraud scores be visible to CX agents?

Yes, with explanations. Hidden scores lead to mistrust and misuse.

7. When should a return be outright blocked?

Only for extreme, repeated violations. Most cases should be handled through friction, not denial.
